Beyond the Warehouse: Why Modern Data Management Breaks Down Across Systems

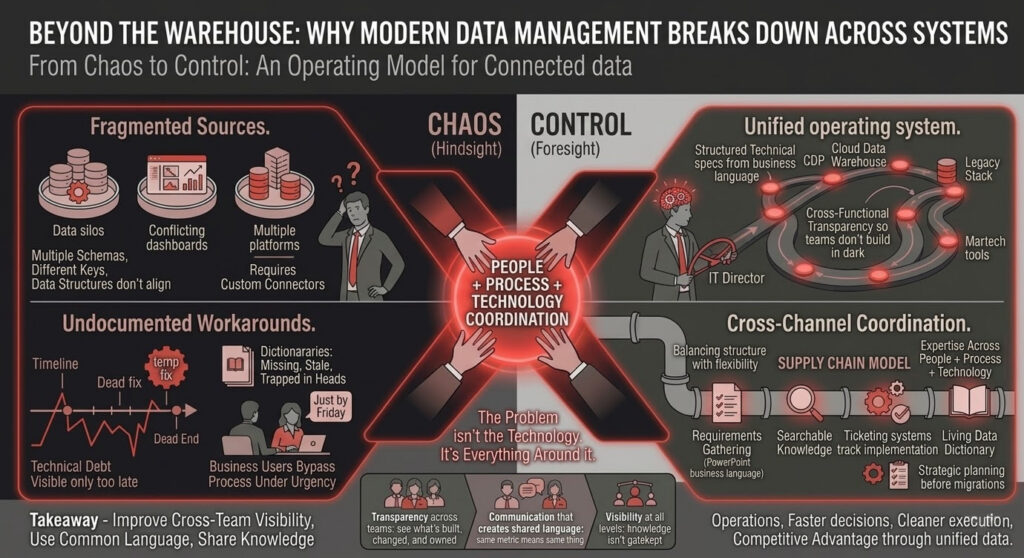

Organizations invest heavily in modern data management tools—CDPs, cloud data warehouses, analytics platforms—yet IT Directors still end up staring at the same symptoms: fragmented sources, inconsistent definitions, conflicting dashboards, and teams building parallel versions of reality.

That’s the frustration. The spend went up. The clarity didn’t.

And here’s the punchline most organizations learn the expensive way: the problem usually isn’t the technology. It’s everything around it. Integration that doesn’t actually integrate. Governance that exists on paper but collapses under urgency. Documentation that’s missing, stale, or trapped in someone’s head. Communication that breaks as soon as “business language” has to become “technical specs.”

Data management fails when it’s treated like a platform purchase instead of an operating model.

The problem: data investments aren’t delivering

IT Directors are under pressure to make data usable. Fast. Reliable. Secure. And ideally, self-serve.

But even in well-funded organizations, the same outcomes keep showing up:

- Systems don’t “talk” without custom work

- Data structures don’t align across tools

- Teams use different keys, definitions, and frameworks

- Technical debt becomes visible only when it’s too late (migrations are a great spotlight)

The promise is a clean pipeline. The reality is patchwork.

And patchwork isn’t just messy. It’s expensive. It slows decision-making, increases risk, and quietly drains ROI from every analytics and martech investment tied to that data.
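To make the "different keys" symptom concrete, here is a minimal sketch of two systems that describe the same customers with incompatible identifiers. Everything in it is a hypothetical illustration: the `C-00123` ID format, the field names, and the sample rows are assumptions, not a description of any particular CDP or warehouse.

```python
# Hypothetical records from two systems describing the same customers.
crm_rows = [
    {"customer_id": "C-00123", "email": "ana@example.com"},
    {"customer_id": "C-00456", "email": "raj@example.com"},
]
warehouse_rows = [
    {"cust_key": 123, "lifetime_value": 1800.0},
    {"cust_key": 456, "lifetime_value": 240.0},
    {"cust_key": 789, "lifetime_value": 75.0},
]


def normalize_crm_id(raw: str) -> int:
    """Convert a CRM-style ID such as 'C-00123' to the integer 123.

    The 'C-' prefix and zero padding are an assumed convention.
    """
    return int(raw.split("-", 1)[1])


def match_rate(left_keys, right_keys) -> float:
    """Share of left-side keys that also appear on the right side."""
    left = list(left_keys)
    right = set(right_keys)
    if not left:
        return 0.0
    return sum(1 for key in left if key in right) / len(left)


warehouse_keys = [row["cust_key"] for row in warehouse_rows]

# Comparing raw keys: strings never equal integers, so nothing matches.
naive = match_rate((row["customer_id"] for row in crm_rows), warehouse_keys)

# Comparing after applying the agreed normalization rule.
normalized = match_rate(
    (normalize_crm_id(row["customer_id"]) for row in crm_rows), warehouse_keys
)

print(f"naive match rate: {naive:.0%}")
print(f"normalized match rate: {normalized:.0%}")
```

The custom work is rarely the join itself. It is finding out that a rule like `normalize_crm_id` is needed, agreeing on it, and writing it down where the next team can find it.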

What people think data management means (and where that breaks down)

Most people picture data management as a tidy warehouse. A few tables. Everything relational. Everything connected. Everyone confident.

That mental model works… right up until you introduce a second platform.

Or a third. Or six.

Because now you’re dealing with different requirements, different schemas, different keys, different governance rules, and different teams who each have “good reasons” for doing it their way.

This is where the breakdown usually starts:

- Multiple platforms require different data structures

- Business users bypass processes to meet urgent deadlines

- Legacy systems reflect outdated organizational structures

- Documentation doesn’t exist—or isn’t maintained

The moment business urgency enters the room, governance is the first casualty. The request is always reasonable: “We just need it by Friday.” The impact is rarely visible until later: a new exception, a new workaround, a new rule that only one person remembers.

Multiply that over time and you don’t get a modern data ecosystem. You get chaos with a cloud logo on it.

The real definition: data management across teams, tools, and platforms

So, what is data management when data moves across an entire organization?

It’s not just storage. It’s not just cleaning. It’s not just pipelines.

In practice, it starts upstream with requirements gathering—often in PowerPoint, using business language. Then it has to become structured technical specifications engineers can build against. Then it has to move through systems and teams that each have their own workflows and constraints, including:

- Ticketing systems that track implementation

- Cloud-based documentation tools for searchable knowledge

- Multiple specialized teams with different needs and incentives

- Different platforms requiring unique approaches

At that scale, data management is less like “maintaining a database” and more like running a supply chain.

And supply chains don’t work on hope. They work on coordination.

The three foundations that keep it from collapsing

To make data management work across an organization, three things have to be true:

- Transparency across teams: people can see what’s being built, changed, and owned

- Communication that creates shared language: the same metric means the same thing everywhere

- Visibility at all levels: knowledge isn’t gatekept by individuals

Without these, you can buy the best platforms in the world and still fail.
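One way to picture "the same metric means the same thing everywhere" is a single definition that every team imports instead of re-deriving. The sketch below is an assumption-heavy illustration: the metric name, the 90-day window, and the owner label are hypothetical, and a real organization might keep this in a semantic layer or catalog rather than in code.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class MetricDefinition:
    """One shared, owned definition of a business metric."""
    name: str
    description: str
    owner: str
    window_days: int


# Hypothetical shared definition; the values are illustrative only.
ACTIVE_CUSTOMER = MetricDefinition(
    name="active_customer",
    description="Customer with at least one purchase in the trailing window",
    owner="data-governance",
    window_days=90,
)


def is_active(last_purchase: date, as_of: date,
              metric: MetricDefinition = ACTIVE_CUSTOMER) -> bool:
    """Apply the shared definition to a single customer."""
    age = as_of - last_purchase
    return timedelta(0) <= age <= timedelta(days=metric.window_days)


def count_active(last_purchases, as_of: date) -> int:
    """Count customers that meet the shared definition."""
    return sum(1 for purchased in last_purchases if is_active(purchased, as_of))


purchases = [date(2024, 1, 10), date(2024, 3, 1), date(2023, 6, 1)]
print(count_active(purchases, as_of=date(2024, 3, 31)))  # prints 2
```

If marketing and finance both call `count_active`, their dashboards can still disagree about the data, but not about what "active" means.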

Why “modern tools” don’t solve fragmentation by themselves

New tools can be powerful. They can also expose how fragile your underlying structure really is.

Organizations often aren’t prepared for implementation complexity. A gap opens up between decision-makers selecting a platform and technical teams discovering compatibility issues with existing infrastructure. That gap gets filled with quick fixes.

Then more quick fixes.

Then permanent quick fixes.

Common failure points show up fast:

Incompatibility creates patchwork

A new platform doesn’t automatically play nicely with your existing warehouse, CDP, or legacy stack. So you end up building “connectors,” “bridges,” and “temporary” solutions that become structural.

The demo illusion

Vendors demo products in pristine environments where everything works. Your reality is decades of technical debt, inconsistent conventions, and undocumented workarounds. The product isn’t lying. The context is missing.

Legacy debt doesn’t reveal itself until migrations

Established companies have often patched their data over time. Some knowledge lives only in the heads of long-tenured employees who act as institutional memory. Newer employees may not know which structures were meant to be unified but remain separate because of historical constraints.

Migrations are where this debt surfaces. What looked like an upgrade can turn into a multi-year remediation effort.

Where data silos derail everything

Data silos aren’t just a technical problem. They’re an organizational one.

When departments have competing priorities, governance frameworks break down. Teams create their own structures to meet their own needs. They’re not doing it out of spite. They’re doing it because they don’t have visibility into what other teams are building—or they can’t afford to wait.

That’s how you end up with isolated “petri dishes of different data structures” that work against unified insights. And it creates predictable pain:

- Duplicated work and wasted effort

- Inconsistent analysis because teams take different approaches

- Collaboration failures because teams can’t support each other

- Quality control gaps due to lack of standardized validation

Silos don’t just slow you down. They make you less sure of what’s true.

The documentation crisis: why data dictionaries matter

Ask any data professional what they want first when integrating new data.

It’s not a dashboard. It’s not a tool.

It’s a data dictionary.

Documentation that clearly explains what tables represent, what fields contain, and what values typically appear is the difference between confident analysis and dangerous guessing.

Without documentation, assumptions multiply. A field that looks like free text might actually hold geo-ID codes. Someone filters or joins on it incorrectly. That error cascades through reporting. Decisions get made off faulty outputs. And the issue isn’t discovered until someone notices results that “feel off.”

Here’s the second layer most organizations miss: documentation is only as good as governance that keeps it current. Stale documentation creates false confidence, which is worse than no confidence.
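As a rough illustration of both layers, the sketch below models a tiny data dictionary and a staleness check. It is a simplified assumption, not a recommended tool: the `stores` table, its fields, the example geo-ID value, and the 180-day review threshold are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class FieldEntry:
    """One documented field in the data dictionary."""
    table: str
    field: str
    dtype: type
    description: str
    example: object
    last_reviewed: date


# Hypothetical entries; names, values, and dates are illustrative only.
DICTIONARY = {
    ("stores", "region"): FieldEntry(
        table="stores",
        field="region",
        dtype=str,
        description="Geo-ID code, not a display name; join to the geo lookup",
        example="US-TX-48113",
        last_reviewed=date(2023, 1, 15),
    ),
    ("stores", "store_id"): FieldEntry(
        table="stores",
        field="store_id",
        dtype=int,
        description="Surrogate key assigned by the warehouse",
        example=1042,
        last_reviewed=date(2024, 5, 1),
    ),
}


def describe(table: str, field: str) -> str:
    """Return the documented meaning of a field, or flag it as undocumented."""
    entry = DICTIONARY.get((table, field))
    if entry is None:
        return f"{table}.{field}: UNDOCUMENTED"
    return f"{table}.{field} ({entry.dtype.__name__}): {entry.description}"


def stale_entries(as_of: date, max_age_days: int = 180) -> list:
    """List fields whose documentation has not been reviewed recently."""
    return sorted(
        f"{entry.table}.{entry.field}"
        for entry in DICTIONARY.values()
        if (as_of - entry.last_reviewed).days > max_age_days
    )


print(describe("stores", "region"))
print(describe("stores", "manager"))
print(stale_entries(as_of=date(2024, 6, 1)))
```

The lookup answers "what does this field contain?" before anyone writes a query. The review date is the governance part: an entry nobody has checked in months is flagged instead of silently trusted.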

Data management maturity is real—and technical debt is inevitable

Data management maturity varies widely by company size, history, and how quickly the organization has scaled.

Startups evolve methods as they learn. Established organizations tend to have more structured processes—but also more legacy artifacts.

Every database contains the story of how it was built:

- Different people

- Different skill levels

- Different organizational priorities

- Different “temporary” decisions

Over time, that accumulated history becomes technical debt you have to manage—especially when you’re trying to unify data across platforms.

The path to better integration

The organizations that improve data management outcomes don’t just “implement a platform.” They build an operating model.

They invest in:

- Comprehensive governance that balances structure with flexibility

- Living documentation (including data dictionaries) that stays current

- Cross-functional transparency so teams don’t build in the dark

- Strategic planning before migrations expose every hidden assumption

- Expertise that understands data management as people + process + technology

The most valuable expertise isn’t just knowing a platform. It’s knowing how data works together in complicated environments—and how to structure it so it stays usable as the organization changes.

The diagnostic questions IT Directors should ask before making platform decisions

Before selecting the next tool, the smarter move is to get brutally honest about current state.

Ask questions like:

- What systems are we integrating today—and where are the known breaks?

- Do we have a shared data dictionary and standardized definitions? If not, what’s the plan to create and maintain one?

- Where does governance get bypassed—and why?

- Who owns documentation, and how do we ensure it stays current?

- What technical debt are we about to uncover if we migrate?

- Do teams have visibility into each other’s work—or is knowledge siloed?

These questions don’t just prevent bad purchases. They prevent disappointment after good purchases.

Moving forward: from chaos to control

Data management doesn’t have to be chaotic. But solving it requires more than buying tools.

Organizations can spend millions on CDPs, cloud warehouses, and analytics platforms. The demos can look flawless. The ROI projections can be irresistible. And the reality can still be fragmentation—unless integration, governance, documentation, and communication are treated as first-class work.

Because the real outcome isn’t “better reporting.”

It’s better operations. Faster decisions. Cleaner execution. More confidence. The ability to act on opportunities competitors can’t see through fragmented data.

That’s what strong data management buys you: competitive advantage.

About Tandem Theory

At Tandem Theory, we approach data management the same way we approach any serious growth and performance initiative: as an operating system, not a toolset. That means diagnosing current-state reality, building governance and documentation that can survive urgency, and creating cross-functional transparency so systems—and teams—can actually work together. If you’re ready to move beyond patchwork fixes, reduce risk during migrations, and turn fragmented data into a durable advantage your organization can scale on, we’d love to help.